12/19/2023

EC2 autoscale based on SQS queue

The repository also includes a README file with detailed instructions that you can follow to deploy the solution.

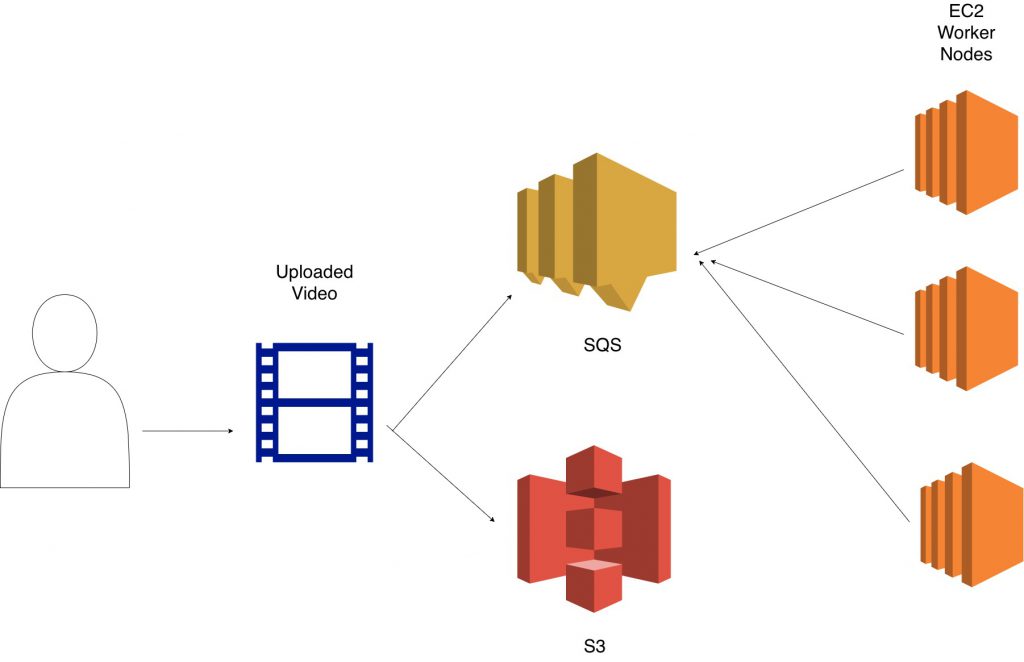

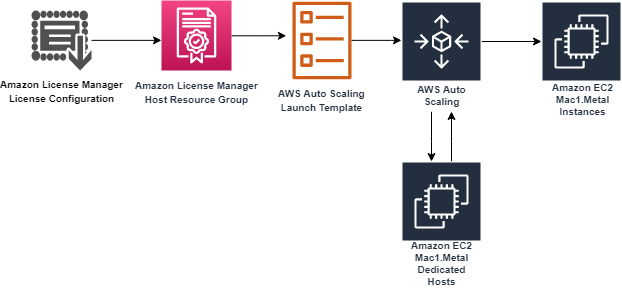

We also provide an automated deployment solution for this architecture using an AWS Serverless Application Model (AWS SAM) template and some Python code ( repository link). To demonstrate how the approach above can be implemented in practice, we put together an example architecture highlighting the services involved (see the following figure). This allows the scaling policy to adapt to variations in the average MPD over time, and thus enables the application to honor its acceptable latency. Therefore, the solution we propose here works by monitoring the average MPD over time and dynamically adjusting the target value of the Auto Scaling group’s target tracking policy (acceptable BPI) based on the observed changes in the MPD. Given the acceptable BPI equation, a longer MPD requires us to use a smaller target value if we are to process these messages in the same acceptable latency, and vice versa.

When scaling in response to an Amazon SQS backlog, it’s good practice to use a scaling metric known as the Backlog Per Instance (BPI) and a target value based on the acceptable BPI. A target tracking scaling policy works by adjusting the capacity to keep a scaling metric at, or close to, the specified target value. Solution overviewīefore we dive into the solution, let’s briefly review the target tracking policy’s scaling metric and its corresponding target value. This includes image processing, document processing, computational jobs, and others. Applications where the processing duration scales with input data size are particularly vulnerable to this problem. Therefore, the challenge is common to any latency-sensitive application where the MPD is subject to change. As a result, the application fails to process the latter images within its acceptable latency. As in the first week, the Auto Scaling group scales to 10 instances, but this time each instance processes 100 images in 200s, which is twice as long as was needed in the first round. Since an image’s processing duration scales with its size, each image takes two seconds to process. In the second week, the customer submits 1000 slightly larger images for processing. Therefore, the Auto Scaling group scales to 10 instances, each of which processes 100 images in 100s, thereby honoring the target latency of 100s. In the first week, the customer submits 1000 images to the Amazon SQS queue for processing, each of which takes one second of processing time. To achieve the target latency mentioned previously, the customer assumes that each image can be processed in one second, and configures the target value of the scaling policy so that the average image backlog per instance is maintained at approximately 100 images. The worker tier consists of an Auto Scaling group configured with a target tracking policy. 
Latency refers here to the time required for any queue message to be consumed and fully processed.Ĭonsider the example of a customer using a worker tier to process image files (e.g., resizing, rescaling, or transformation) uploaded by users within a target latency of 100 seconds. The key challenge that this post addresses is applications that fail to honor their acceptable/target latency in situations where the MPD varies over time. We also cover the utilization of Amazon EC2 Spot instances, mixed instance policies, and attribute-based instance selection in the Auto Scaling Groups as well as best practice implementation to achieve greater cost savings. Specifically, we demonstrate how to dynamically update the target value of the Auto Scaling group’s target tracking policy based on observed changes in the MPD. This post builds on that guidance to focus on latency-sensitive applications where the MPD varies over time. For latency-sensitive applications, AWS guidance describes a common pattern that allows an Auto Scaling group to scale in response to the backlog of an Amazon SQS queue while accounting for the average message processing duration (MPD) and the application’s desired latency. For example, an EC2 Auto Scaling Group can be used as a worker tier to offload the processing of audio files, images, or other files sent to the queue from an upstream tier (e.g., web tier). Scaling an Amazon EC2 Auto Scaling group based on Amazon Simple Queue Service (Amazon SQS) is a commonly used design pattern in decoupled applications. Specialist Solution Architect, EC2 Flexible Compute. This blog post is written by Wassim Benhallam, Sr Cloud Application Architect AWS WWCO ProServe, and Rajesh Kesaraju, Sr.
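The arithmetic behind the image-processing example in this post, and the effect of recomputing the target value as the MPD changes, can be sketched in a few lines of Python. This is a minimal illustration and not the code from the repository: the function names (`acceptable_bpi`, `instances_needed`, `time_to_drain_s`) are hypothetical, and the sketch assumes the backlog is spread evenly across instances and that every message takes exactly the average MPD.

```python
import math


def acceptable_bpi(acceptable_latency_s: float, avg_mpd_s: float) -> float:
    """Target value for the policy: acceptable latency divided by average MPD."""
    if avg_mpd_s <= 0:
        raise ValueError("average MPD must be positive")
    return acceptable_latency_s / avg_mpd_s


def instances_needed(backlog: int, target_bpi: float) -> int:
    """Instance count that keeps the backlog per instance at or below the target."""
    return max(1, math.ceil(backlog / target_bpi))


def time_to_drain_s(backlog: int, instances: int, avg_mpd_s: float) -> float:
    """Time for the busiest instance to finish its share (even split assumed)."""
    return math.ceil(backlog / instances) * avg_mpd_s


LATENCY_S = 100   # acceptable latency from the example
BACKLOG = 1000    # images submitted each week

# Week 1: MPD is 1s, so the target value of 100 is correct.
static_target = acceptable_bpi(LATENCY_S, avg_mpd_s=1.0)
week1_instances = instances_needed(BACKLOG, static_target)
week1_latency = time_to_drain_s(BACKLOG, week1_instances, avg_mpd_s=1.0)

# Week 2 with the same static target: MPD doubled, so latency doubles too.
week2_static_instances = instances_needed(BACKLOG, static_target)
week2_static_latency = time_to_drain_s(BACKLOG, week2_static_instances, avg_mpd_s=2.0)

# Week 2 with the target recomputed from the observed MPD of 2s.
dynamic_target = acceptable_bpi(LATENCY_S, avg_mpd_s=2.0)
week2_dynamic_instances = instances_needed(BACKLOG, dynamic_target)
week2_dynamic_latency = time_to_drain_s(BACKLOG, week2_dynamic_instances, avg_mpd_s=2.0)

print("week 1:", week1_instances, "instances,", week1_latency, "s")
print("week 2 (static target):", week2_static_instances, "instances,", week2_static_latency, "s")
print("week 2 (dynamic target):", week2_dynamic_instances, "instances,", week2_dynamic_latency, "s")
```

With the static target, the second week keeps 10 instances and misses the 100s latency. Halving the target to 50 lets the group grow to 20 instances and stay within it. In a real deployment the recomputed value would still have to be written back to the target tracking policy (for example through the Auto Scaling `PutScalingPolicy` API), which this sketch leaves out.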
